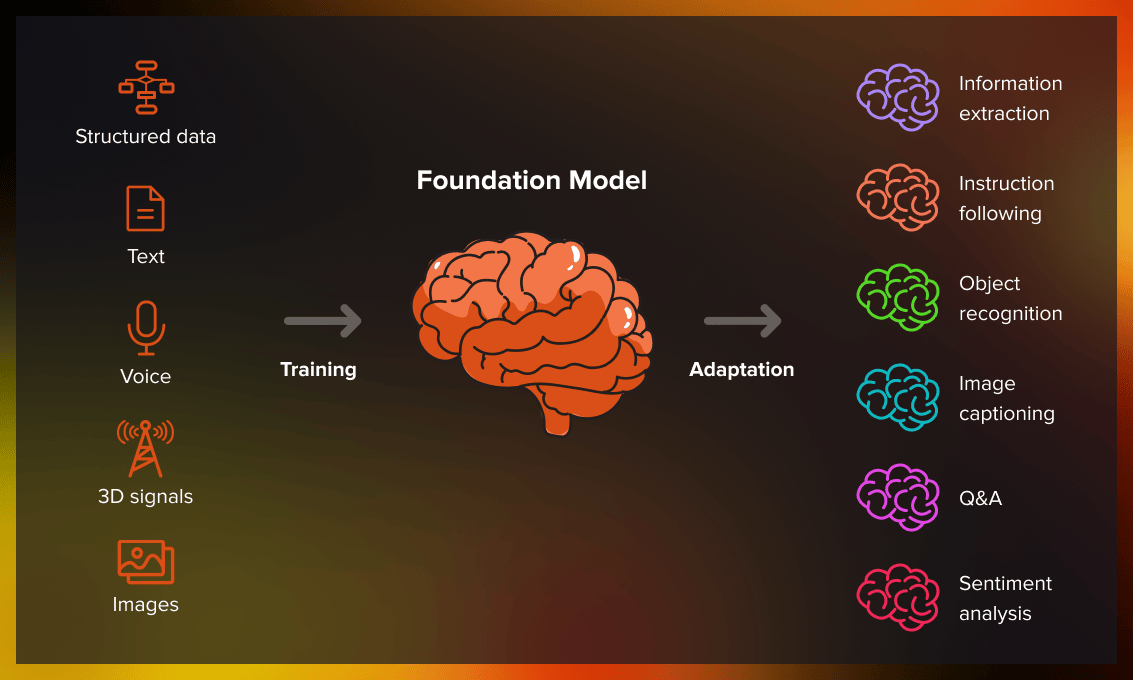

The way artificial intelligence is built and deployed has changed significantly over the past few years. Rather than training separate models for each specific task, researchers and engineers now develop large, general-purpose systems capable of handling a wide range of applications. These are called foundation models.

A foundation model is a large-scale AI system trained on broad, diverse datasets using self-supervised or semi-supervised learning techniques. Once trained, it can be adapted to numerous downstream tasks, from text generation and image recognition to code writing and question answering. For anyone pursuing gen AI training in Hyderabad or elsewhere, understanding foundation models is not optional: it is central to how modern AI systems are designed and deployed.

What Makes a Model a “Foundation Model”?

The term “foundation model” was introduced in 2021 by researchers at Stanford University's Center for Research on Foundation Models. It describes models that serve as a base, or foundation, upon which more specialized applications are built.

Three characteristics define these models:

Scale: Foundation models are trained on massive datasets containing billions or even trillions of tokens. This scale gives them a broad understanding of language, patterns, and relationships across domains.

Generality: Unlike traditional models designed for one task, foundation models learn representations that transfer across tasks. The same base model can be adapted for summarization, classification, translation, or code generation.

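Adaptability: Foundation models can be customized for specific use cases without being rebuilt from scratch. The two main routes are fine-tuning (further training on task-specific data) and prompting (supplying instructions and examples at inference time, with no change to the model's weights). Either route spares organizations deploying AI at scale the cost of training a new model for every task.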
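
To make the prompting route concrete, here is a minimal sketch of how a few-shot prompt might be assembled. The function name, the "Input:/Output:" layout, and the sample reviews are illustrative assumptions, not a format required by any particular model.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble a few-shot prompt: instruction, worked examples, then the new input."""
    lines = [instruction, ""]
    for text, label in examples:
        lines.append(f"Input: {text}")
        lines.append(f"Output: {label}")
        lines.append("")
    # The final "Output:" is left blank for the model to complete.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


# Hypothetical sentiment task; the examples are made up for illustration.
prompt = build_few_shot_prompt(
    "Classify the sentiment of each review as positive or negative.",
    [
        ("The battery lasts all day.", "positive"),
        ("The screen cracked within a week.", "negative"),
    ],
    "Setup was quick and painless.",
)
print(prompt)
```

The same base model could be pointed at a different task simply by swapping the instruction and examples, which is what makes prompting the cheapest form of adaptation.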

Examples of well-known foundation models include GPT-4, Claude, Gemini, LLaMA, and BERT. Each of these has been trained on diverse data and is used across a variety of real-world applications.

How Foundation Models Are Trained

Training a foundation model requires enormous computational resources and carefully curated data. The process typically involves the following stages:

Pre-training is the first and most resource-intensive phase. The model is exposed to large corpora of text, images, code, or multimodal data. During this phase, it learns general patterns, grammar, facts, reasoning structures, and contextual relationships through objectives like predicting the next token or reconstructing masked inputs.
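
The two objectives mentioned above can be illustrated by looking at how training examples are derived from raw text. The sketch below is a simplification: it assumes whitespace-separated words as tokens (real models typically use subword tokenizers), and the function names and the "[MASK]" placeholder are chosen for illustration.

```python
def next_token_examples(tokens):
    """Pair every prefix of the sequence with the token that follows it."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]


def mask_tokens(tokens, positions, mask="[MASK]"):
    """Hide the tokens at the given positions; return the masked input and the targets."""
    hidden = set(positions)
    masked = [mask if i in hidden else tok for i, tok in enumerate(tokens)]
    targets = {i: tokens[i] for i in sorted(hidden)}
    return masked, targets


tokens = ["foundation", "models", "learn", "general", "patterns"]

# Next-token prediction: the model sees the context and must predict the target.
for context, target in next_token_examples(tokens):
    print(context, "->", target)

# Masked reconstruction: the model sees the masked input and must recover the targets.
masked, targets = mask_tokens(tokens, positions=[1, 3])
print(masked, targets)
```

In both cases the labels come from the text itself, which is why no manual annotation is needed at this stage.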

Fine-tuning comes next. Once pre-training is complete, the model is trained further on a smaller, task-specific dataset. This adjusts its general knowledge toward a particular domain or application, such as medical diagnosis, legal document review, or customer support.
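
The core idea of fine-tuning, continuing training from already-learned weights instead of starting over, can be shown with a deliberately tiny stand-in. The sketch below uses a two-parameter linear model rather than a neural network; the datasets, learning rates, and epoch counts are invented for illustration and say nothing about real training settings.

```python
def train(weights, data, learning_rate, epochs):
    """Gradient descent on mean squared error for the model y = w * x + b."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


def mse(weights, data):
    """Mean squared error of the model on a dataset."""
    w, b = weights
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


# "Pre-training": a larger, general dataset following y = 2x.
general_data = [(x / 10, 2 * x / 10) for x in range(-20, 21)]
pretrained = train((0.0, 0.0), general_data, learning_rate=0.1, epochs=500)

# "Fine-tuning": a handful of task-specific points following y = 2x + 1,
# starting from the pre-trained weights and using a smaller learning rate.
task_data = [(0.0, 1.0), (0.5, 2.0), (1.0, 3.0), (1.5, 4.0)]
fine_tuned = train(pretrained, task_data, learning_rate=0.05, epochs=200)

print("task error before fine-tuning:", mse(pretrained, task_data))
print("task error after fine-tuning: ", mse(fine_tuned, task_data))
```

The fine-tuning run needs only four data points because most of what the model needs (the slope) was already learned during the first phase; only the offset has to be adjusted.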

Reinforcement Learning from Human Feedback (RLHF) is increasingly used to align model outputs with human preferences. Human evaluators rank model responses; those rankings are typically used to train a reward model, which then guides further optimization of the model through reinforcement learning.
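
One common way to turn rankings into a training signal is a pairwise preference loss, which penalizes a reward model whenever it scores a rejected response above a preferred one. The sketch below shows that formulation under stated assumptions: the response names and reward scores are hypothetical, and a real pipeline would compute the scores with a neural network rather than a lookup table.

```python
import math
from itertools import combinations


def ranking_to_pairs(ranked_responses):
    """Turn a best-to-worst ranking into (preferred, rejected) pairs."""
    return list(combinations(ranked_responses, 2))


def preference_loss(reward_preferred, reward_rejected):
    """Pairwise loss -log(sigmoid(r_preferred - r_rejected))."""
    margin = reward_preferred - reward_rejected
    # log(1 + exp(-margin)) is algebraically equal to -log(sigmoid(margin)).
    return math.log1p(math.exp(-margin))


# A human evaluator's ranking, best first (hypothetical labels).
ranking = ["response_a", "response_b", "response_c"]
pairs = ranking_to_pairs(ranking)

# Hypothetical scores a reward model might currently assign.
rewards = {"response_a": 1.2, "response_b": 0.3, "response_c": -0.5}
mean_loss = sum(preference_loss(rewards[p], rewards[r]) for p, r in pairs) / len(pairs)

print(pairs)
print(mean_loss)
```

The loss shrinks as the reward model's scores agree more strongly with the human ranking, so minimizing it pushes the reward model toward the evaluators' preferences.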

This training pipeline is a core topic in structured gen AI training programs in Hyderabad, where learners gain hands-on exposure to how these systems are built, evaluated, and deployed in production environments.

Applications Across Industries

One of the most significant advantages of foundation models is their versatility. A single trained model can support multiple functions across different sectors.

Healthcare: Foundation models analyze clinical notes, assist in medical coding, and support diagnostic workflows by processing large volumes of unstructured medical text.

Finance: These models power fraud detection systems, generate financial summaries, and assist analysts by extracting insights from earnings reports and regulatory filings.

Education: AI tutoring platforms built on foundation models can answer student questions, generate practice problems, and explain complex concepts in simple terms.

Software development: Tools like GitHub Copilot, which are built on foundation models, assist developers by suggesting code completions, identifying bugs, and generating documentation.

Legal and compliance: Foundation models can review contracts, flag inconsistencies, and summarize lengthy legal documents with reasonable accuracy.

The breadth of these applications explains why organizations in many sectors are investing in teams with foundation model expertise. This demand is also why gen AI training in Hyderabad has seen growing enrollment from professionals across IT, healthcare, finance, and education.

Challenges in Working with Foundation Models

Despite their capabilities, foundation models come with real limitations that practitioners must understand.

Hallucination remains a persistent issue. Models sometimes generate confident-sounding but factually incorrect outputs. This requires careful validation in high-stakes applications.
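
Validation can take many forms. As one narrow illustration, the sketch below flags numeric figures in a model's answer that do not appear anywhere in the source text the answer was supposed to be based on. The function name and sample texts are hypothetical, and a check like this catches only one kind of error; it is no substitute for human review.

```python
import re


def unsupported_numbers(answer, source):
    """Return numbers that appear in the answer but nowhere in the source text."""
    pattern = r"\d+(?:\.\d+)?"
    source_numbers = set(re.findall(pattern, source))
    return [n for n in re.findall(pattern, answer) if n not in source_numbers]


# Hypothetical source document and model-generated summary.
source = "Revenue rose 12.5 percent to 480 million in the third quarter."
answer = "Revenue grew 12.5 percent to 520 million."

print(unsupported_numbers(answer, source))  # ['520']
```

Any flagged figure would then be routed to a person or a stricter check before the output is used.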

Bias is another concern. Because these models are trained on data from the internet and other large sources, they can inherit and amplify societal biases present in that data.

Cost and compute requirements are substantial. Training foundation models from scratch is beyond the reach of most organizations, which is why fine-tuning existing open-source or commercial models has become the more practical approach.

Interpretability is limited. Understanding why a foundation model produces a specific output remains a research challenge, which complicates deployment in regulated industries.

Conclusion

Foundation models represent a fundamental shift in how AI is built. By training once on diverse data and adapting to many tasks, they offer scalability and efficiency that earlier approaches could not match. As these models become more embedded in enterprise workflows, the ability to work with, fine-tune, and evaluate them becomes a critical professional skill. For learners seeking a structured path into this field, pursuing gen AI training in Hyderabad offers a practical entry point into one of the most important technologies shaping the decade ahead.